Wheat from the chaff: extracting, transforming, and loading data for Open Targets

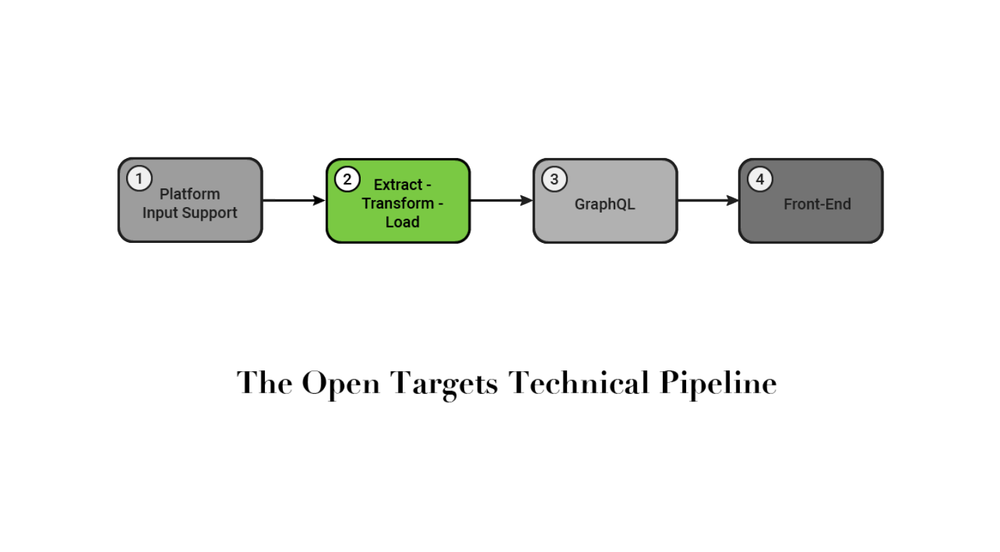

The second part of our four-part series discussing the Open Targets Platform explores our Extract-Transform-Load (ETL) pipeline.

The ETL is the weft to the warp provided by the Platform-Input-Support project covered in our last blog post; pulling together the many inputs into the fabric of the Open Targets platform.

The ETL is written in Scala and uses Spark as an analytics engine. We run the ETL using Google Cloud Computing's Dataproc service which allows us to focus on our business logic and not the details of maintaining and configuring the computing environment. As an open source project, you can see our inputs, outputs, and the jar file to run the ETL on your own cluster by accessing the necessary resources from Open Targets' cloud storage. The outputs of the ETL are made available via FTP, Google's BigQuery, a GraphQL API, and the Open Targets Platform (see the documentation for how to access these resources).

We're in the final phases of migrating our ETL from Python to Scala and Spark. The motivation for the migration was that the old data pipeline proved too slow for the quantity of data and intensity of processing that were required to meet the goals of Open Targets. Having a pipeline that took upwards of 18 hours to run discouraged experimentation and iteration as the feedback loop was very slow.

Working as a team of bioinformaticians, scientists and software developers means that we want to be able to test our new ideas quickly, while remaining robust quality requirements. To support these competing priorities the pipeline is designed as a series of parameterised steps which can be run (mostly) independently. On the data side this allows us to interate quickly as we can run only a portion of the ETL and individual steps are typically quite fast (~5 minutes using a full dataset). The software engineering side, a strongly typed language such as Scala lets us change the ETL with confidence which parts of the output data may be impacted thereby maintaining robustness.

The entire ETL can be conceived of as a directed acyclic graph (DAG). An important enhancement yet to be implemented is to automatically run related steps so any single step can be run without having to have any knowledge of dependencies between steps. This is not a new problem in computer science, so presently the biggest challenge is choosing between the many possible implementatations ranging from a Java library such as jgrapht, using SBT's native task graph resolution, or specifying a Dataproc workflow.

The data generated by the ETL is used as the foundation for the newly updated and terrific looking Open Targets Platform which is the topic of the final two blog posts.